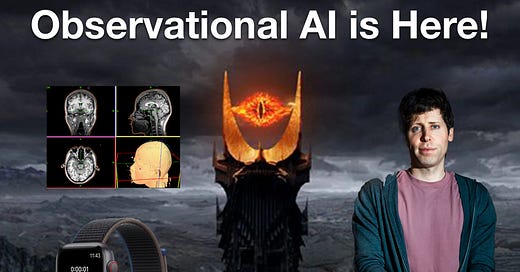

Is "Observational AI" the Next Big Thing?

Generative AI is flashy, but its ability to observe may be more important

The Frontier Psychiatrists is a daily health-themed newsletter. Physician-author Owen Scott Muir writes it, and when he’s not writing, he’s cranking away on AI-guided health research.